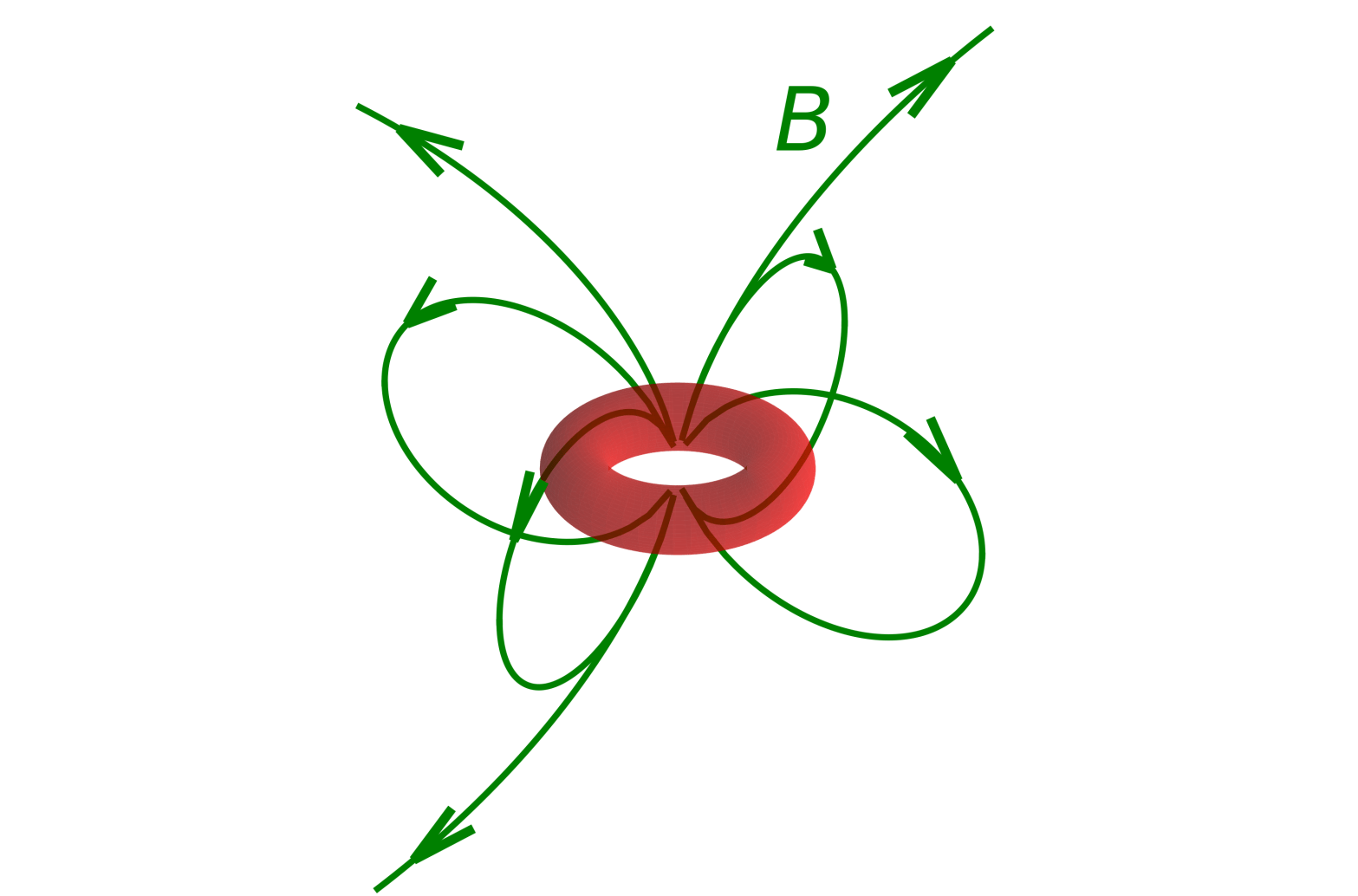

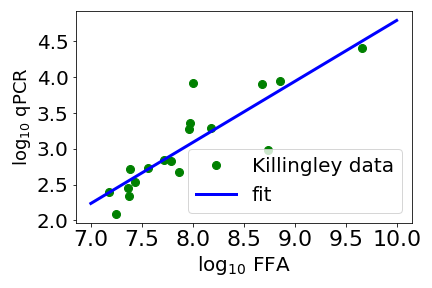

Large Language Models (LLMs) such as OpenAI’s ChatGPT, Google’s Gemini, Anthropic’s Claude and Microsoft’s Copilot are very topical at the moment, and they can all write Python code. Up until the last academic year*, my biological physics teaching included a coursework element that basically asked students to choose variables correctly and then fit a straight line to noisy data**. I set this partly because data fitting is such a useful skill that I thought using coursework to push students into practicing it would help them. I'm not sure the coursework was that popular, but I contend it taught useful skills.
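To give a flavour of what that kind of task involves, here is a minimal sketch in Python. It is not the actual coursework: the exponential-decay model, the parameter values, the noise level and the helper name `fit_line` are all assumptions made up for illustration. The idea is that picking ln(y) rather than y as the variable turns the curve into a straight line, which can then be fitted by ordinary least squares.

```python
import math
import random


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical noisy data (an assumption, not the coursework data):
# exponential decay y = A * exp(-k * t) with multiplicative noise.
random.seed(0)
A_TRUE, K_TRUE = 5.0, 0.3
ts = [0.5 * i for i in range(20)]
ys = [A_TRUE * math.exp(-K_TRUE * t) * math.exp(random.gauss(0.0, 0.05))
      for t in ts]

# Choosing variables: ln(y) = ln(A) - k * t is a straight line in t,
# so fit ln(y) against t and read k and A off the slope and intercept.
slope, intercept = fit_line(ts, [math.log(y) for y in ys])
k_est = -slope
a_est = math.exp(intercept)
print(f"k = {k_est:.3f}, A = {a_est:.3f}")
```

The fitted `k_est` and `a_est` should land close to the values used to generate the data. In practice a library routine such as NumPy's `polyfit` would do the same job; the hand-written version is only there to keep the sketch self-contained.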

The students’ performance was mixed, and I was curious to see whether ChatGPT et al. could do better, given a good prompt. ChatGPT itself couldn’t (at the first attempt), but the code from Gemini, Claude and Copilot was correct.